Agent Harnesses

What Is a Harness?

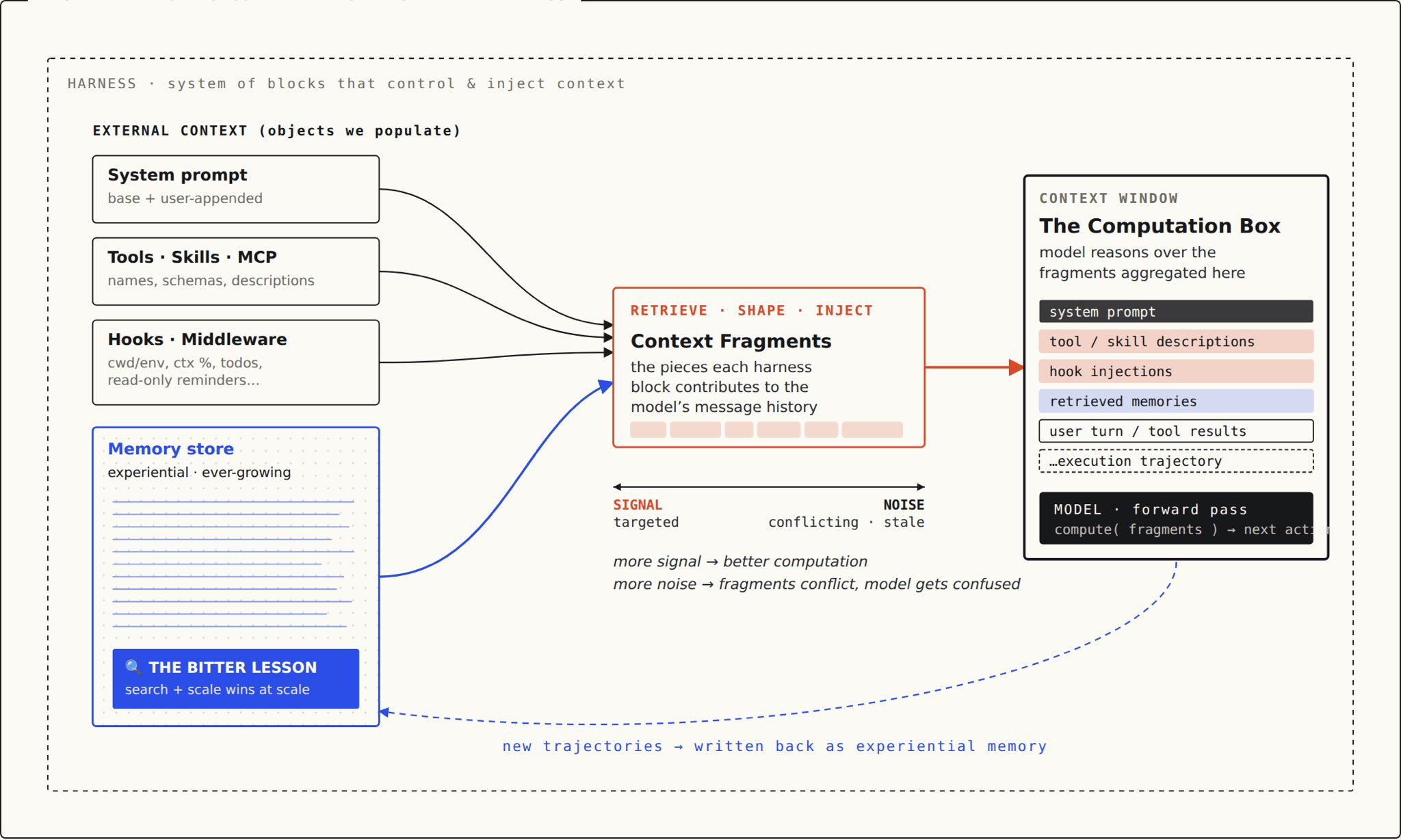

A harness is the runtime orchestration layer that wraps a model’s reasoning loop and coordinates:

- Tool execution — file editing, bash, web search, MCP servers

- Context management — what the model sees, when, and how much

- Safety enforcement — permissions, dangerous command detection, approval flows

- Session persistence — memory, state, conversation history

- Channel integration — how humans interact (CLI, Telegram, Discord, web)

From @vtrivedy10
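Here is a minimal Python sketch of how those five responsibilities fit around a single reasoning loop. Everything in it is hypothetical and illustrative. `fake_model` stands in for a real model API, the two tools are toys, and the deny-list is only a placeholder for real dangerous-command detection.

```python
import re
from dataclasses import dataclass, field
from typing import Callable

# Safety enforcement: a hypothetical deny-list, far from exhaustive.
DANGEROUS = [re.compile(r"\brm\s+-rf\b"), re.compile(r"\bmkfs\b")]

# Tool execution: name -> callable. Toys standing in for bash, file edits, MCP servers, etc.
TOOLS: dict[str, Callable[[dict], str]] = {
    "echo": lambda args: args["text"],
    "bash": lambda args: f"(pretend we ran: {args['command']})",
}


@dataclass
class Session:
    """Session persistence: the harness, not the model, owns the history."""
    history: list[dict] = field(default_factory=list)


def is_dangerous(command: str) -> bool:
    return any(p.search(command) for p in DANGEROUS)


def fake_model(context: list[dict]) -> dict:
    """Stand-in for a real model call: request one tool, then answer."""
    if not any(m["role"] == "tool" for m in context):
        return {"tool": "bash", "args": {"command": "ls -la"}}
    return {"answer": "done"}


def run(session: Session, user_msg: str, max_turns: int = 5, window: int = 20) -> str:
    """One user turn. A channel adapter (CLI, Telegram, Discord, web) would call this."""
    session.history.append({"role": "user", "content": user_msg})
    for _ in range(max_turns):
        context = session.history[-window:]  # context management: last N messages only
        step = fake_model(context)
        if "answer" in step:
            session.history.append({"role": "assistant", "content": step["answer"]})
            return step["answer"]
        name, args = step["tool"], step["args"]
        if name == "bash" and is_dangerous(args["command"]):
            result = "BLOCKED: requires human approval"  # an approval flow would go here
        else:
            result = TOOLS[name](args)
        session.history.append({"role": "tool", "content": result})
    return "max turns reached"
```

The model never touches the tools, the history, or the permission check directly. That separation is what lets the same loop sit behind any model.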

Per the arXiv formalization (2603.05344):

- Scaffolding = constructing the agent BEFORE the first prompt (CLAUDE.md, skills, rules, context files)

- Harness = everything that happens AFTER (tool execution, context management, safety, persistence)

The moat is the harness, not the model.
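A small sketch makes the scaffolding/harness split concrete. It assumes a hypothetical project layout: `CLAUDE.md`, `rules.md`, `context.md`, and a `skills/` folder with one directory per skill. The only point is that scaffolding runs once and produces a frozen starting context. After the first prompt, the harness takes over.

```python
from pathlib import Path

SCAFFOLD_ORDER = ["CLAUDE.md", "rules.md", "context.md"]  # hypothetical file set


def assemble_scaffold(files: dict[str, str], skills: list[str]) -> str:
    """Pure step: turn already-read context files into one starting context."""
    sections = [f"## {name}\n{files[name].strip()}" for name in SCAFFOLD_ORDER if name in files]
    if skills:
        sections.append("## Available skills\n" + "\n".join(f"- {s}" for s in sorted(skills)))
    return "\n\n".join(sections)


def load_scaffold(project: Path) -> str:
    """Scaffolding: runs BEFORE the first prompt. Nothing here reacts to the conversation."""
    files = {n: (project / n).read_text() for n in SCAFFOLD_ORDER if (project / n).is_file()}
    skills_dir = project / "skills"
    skills = [p.name for p in skills_dir.iterdir() if p.is_dir()] if skills_dir.is_dir() else []
    return assemble_scaffold(files, skills)
```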

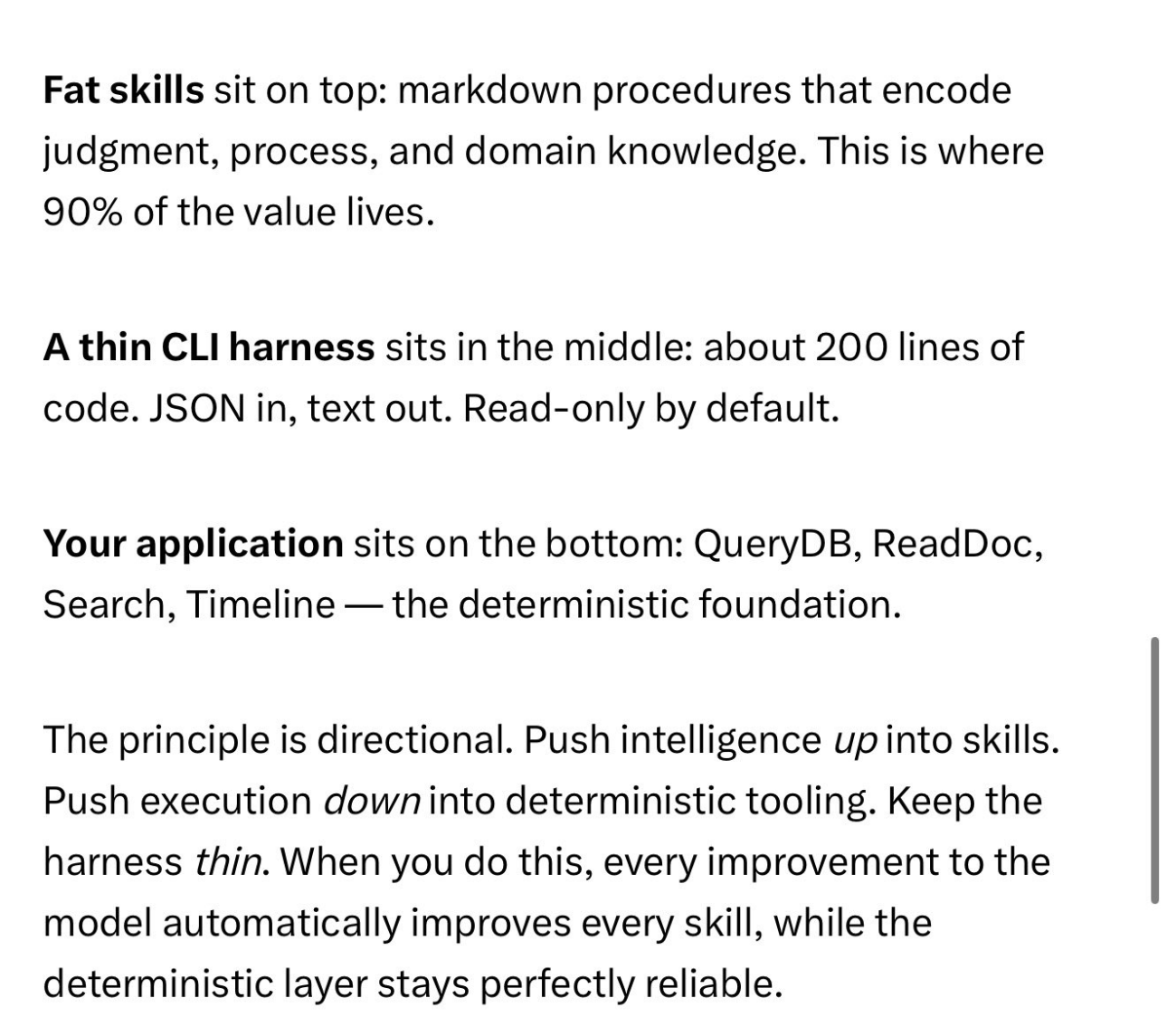

“Fat Skills, Thin Harness, Deterministic App”

From @GarryTan

Why the Harness Matters More Than the Model

Empirical evidence

LangChain’s coding agent went from 52.8% to 66.5% on Terminal Bench 2.0 by changing only the harness. Same model, same prompts. The 13.7-point jump came entirely from orchestration changes.

Market confirmation

OpenAI open-sourced codex-plugin-cc (March 30, 2026) to invoke Codex from inside Claude Code. If models were the moat, this wouldn’t exist. Models are swappable plugins. The harness is the platform.

The Claude Code leak

When Anthropic accidentally published Claude Code’s full source (512K lines of TypeScript), the community ignored the model integration and reverse-engineered the 12-layer harness system, 40+ tools, and orchestration patterns. Claw Code replicated the architecture in Python overnight and reached 100K GitHub stars in 24 hours.

The Two Concepts That Matter Most

1. Context Hygiene (from Dex Horthy - Context Engineering for AI Coding Workflows)

The “dumb zone”: once the context window is more than roughly 40% full, output quality drops, and everything generated after that point gets worse.

Worst context (ranked):

- Incorrect information (worst)

- Missing information

- Noise

Trajectory matters. If an agent keeps getting corrected, the conversation history steers its next tokens toward more mistakes. Errors compound.

The fix: compact early and often. When the context fills up or the session goes sideways, compress the current state into a markdown summary and start fresh. Use sub-agents for context isolation (dispatch one to read a large area of the codebase and return a single sentence), not as stand-ins for human roles like “frontend engineer” or “QA.”
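Here is a sketch of how a harness might enforce "compact early" and isolate sub-agents. It assumes a crude word-count tokenizer and caller-supplied `summarize` and `model` callables, all of them hypothetical. The 0.40 threshold is the heuristic above, not a measured constant.

```python
from dataclasses import dataclass, field
from typing import Callable

DUMB_ZONE = 0.40  # the ~40% fill heuristic from the text


def count_tokens(text: str) -> int:
    # Crude stand-in: one token per whitespace-separated word. Use a real tokenizer in practice.
    return len(text.split())


@dataclass
class Context:
    window: int  # model context window, in tokens
    messages: list[str] = field(default_factory=list)

    def fill(self) -> float:
        return sum(count_tokens(m) for m in self.messages) / self.window


def maybe_compact(ctx: Context, summarize: Callable[[list[str]], str]) -> Context:
    """Compact early: past the threshold, restart from a markdown summary of the state."""
    if ctx.fill() < DUMB_ZONE:
        return ctx
    return Context(window=ctx.window, messages=["# State so far\n" + summarize(ctx.messages)])


def run_subagent(task: str, documents: list[str], model: Callable[[list[str]], str]) -> str:
    """Context isolation: the bulky read lives in a throwaway context.
    Only the sub-agent's short answer goes back to the parent."""
    isolated = [task, *documents]  # never appended to the parent's messages
    return model(isolated)
```

The parent appends only the return value of `run_subagent`, so a thousand-line read costs it one sentence of context.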

2. Research → Plan → Implement

Context hygiene produces a natural workflow:

- Research — read the actual codebase, compress into truthful snapshot

- Plan — exact file names, line snippets, test steps. “The dumbest model won’t screw this up.”

- Implement — follow the plan with deliberately low context

This maps directly to Spec-Driven Development and AI-Native SDLC - 2026 Analysis — specs are the plan artifact, and the harness executes against them.
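The workflow can be sketched as three functions. Each one starts from a fresh context seeded only with the previous phase's artifact. `Model`, `PlanStep`, the `file | change | test` plan format, and `stub_model` are all hypothetical. Swap in a real model call and your own spec format.

```python
from dataclasses import dataclass
from typing import Callable

Model = Callable[[str], str]  # hypothetical: prompt in, text out


@dataclass
class PlanStep:
    file: str    # exact file name
    change: str  # line-level change to make
    test: str    # how to verify the step


def research(model: Model, question: str, code: str) -> str:
    # Phase 1, fresh context: read the real code, return a compressed, truthful snapshot.
    return model(f"Summarize what this code actually does about {question}:\n{code}")


def plan(model: Model, snapshot: str) -> list[PlanStep]:
    # Phase 2, fresh context: sees only the snapshot, never the raw code.
    raw = model(f"Write steps as file | change | test lines:\n{snapshot}")
    steps = []
    for line in raw.splitlines():
        parts = [p.strip() for p in line.split("|")]
        if len(parts) == 3:  # ignore anything that is not a well-formed step
            steps.append(PlanStep(*parts))
    return steps


def implement(model: Model, steps: list[PlanStep]) -> list[str]:
    # Phase 3: one small, fresh context per step (deliberately low context).
    return [model(f"In {s.file}: {s.change}. Verify with: {s.test}") for s in steps]


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in so the pipeline runs without any API."""
    if prompt.startswith("Summarize"):
        return "login() has no rate limiting"
    if prompt.startswith("Write steps"):
        return "auth.py | add a limiter to login() | pytest tests/test_auth.py\nnotes: none"
    return "applied: " + prompt.split(":")[0]
```

Because `plan` drops malformed lines, a vague plan produces fewer steps, not vaguer ones. That is one cheap way to push the "dumbest model won’t screw this up" bar back onto the planning phase.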

Architectural Philosophies

Gateway (OpenClaw, 345K stars)

A persistent process that manages routing, permissions, channels, skills, and connections. The model is one of many tools it orchestrates. It ships 15+ platform integrations and a skills marketplace.
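Here is a toy version of the gateway idea. The model is just the fallback handler, sitting behind a permission check and skill routing. The class and method names are invented for illustration and are not OpenClaw's API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Message:
    channel: str  # e.g. "telegram", "discord", "cli"
    user: str
    text: str


Handler = Callable[[Message], str]


class Gateway:
    """Persistent router: permissions first, then skills, then the model as one handler among many."""

    def __init__(self, model: Handler) -> None:
        self.model = model
        self.skills: dict[str, Handler] = {}
        self.allowed: dict[str, set[str]] = {}  # channel -> users allowed on it

    def allow(self, channel: str, user: str) -> None:
        self.allowed.setdefault(channel, set()).add(user)

    def register_skill(self, command: str, handler: Handler) -> None:
        self.skills[command] = handler

    def handle(self, msg: Message) -> str:
        if msg.user not in self.allowed.get(msg.channel, set()):
            return "denied"  # the permission check happens before any model call
        words = msg.text.split(maxsplit=1)
        command = words[0] if words else ""
        handler = self.skills.get(command, self.model)  # fall back to the model
        return handler(msg)
```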

Memory-First (Hermes, 21.6K stars)

The bet: an agent that knows your preferences, writes its own skills, and retains context across sessions beats one that’s well-routed but forgetful. It is built around Honcho user modeling and a self-improving skill library.

Minimal (Pi)

A tiny extensible harness you adapt to your workflows. No opinions and no batteries included: you extend it with TypeScript extensions, skills, and templates. Created by Armin Ronacher. Built for people who want to understand and control every layer.

Orchestration (Paperclip, 43.9K stars)

The harness IS the company — org charts, budgets, governance, multiple heterogeneous agents as “employees.” Not a replacement for coding harnesses; a layer above them.

Clean-Room (Claw Code, 114K stars)

Replicate Claude Code’s harness in open source. Python/Rust rewrite, model-agnostic, independently audited.

Proxy (Claude Code Router, 31.1K stars)

Intercept the harness instead of replacing it. Route different task types to different models (cheap for simple, expensive for complex). Zero migration, full compatibility.
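The routing idea reduces to a function from task characteristics to a model name. A proxy applies it by rewriting only the request's `model` field. The model names, the 60,000-token threshold, and `TaskInfo` are placeholders. This is not Claude Code Router's implementation or configuration format.

```python
from dataclasses import dataclass

# Hypothetical model names and threshold; NOT Claude Code Router's config schema.
ROUTES = {
    "background": "cheap-small-model",
    "default": "mid-tier-model",
    "think": "expensive-reasoning-model",
    "long_context": "long-context-model",
}
LONG_CONTEXT_TOKENS = 60_000


@dataclass
class TaskInfo:
    prompt_tokens: int
    is_background: bool = False
    needs_reasoning: bool = False


def route(task: TaskInfo) -> str:
    """Cheap models for simple work, expensive ones for complex work."""
    if task.prompt_tokens > LONG_CONTEXT_TOKENS:
        return ROUTES["long_context"]
    if task.is_background:
        return ROUTES["background"]
    if task.needs_reasoning:
        return ROUTES["think"]
    return ROUTES["default"]


def proxy(request_body: dict, task: TaskInfo) -> dict:
    """Rewrite only the model field; nothing else in the request changes."""
    return {**request_body, "model": route(task)}
```

Because only one field changes, the harness on the client side needs no migration. That is the "zero migration" property described above.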

The Current Landscape (April 2026)

| Harness | Stars | Lang | Model Lock-in | Memory | Channels | Skills |
|---|---|---|---|---|---|---|
| Claude Code | Closed | TS | Anthropic | File-based | Via plugins | 12 (ours) |
| OpenClaw | 345K | TS | Agnostic | Persistent | 15+ native | Marketplace |
| Claw Code | 114K | Rust/Py | Agnostic | Replicated | TBD | Compatible |
| Hermes | 21.6K | Py | Agnostic | Self-improving | 15+ native | Self-evolving |
| Codex CLI | 1M devs | Rust | OpenAI | Session | CLI | Via plugins |
| Gemini CLI | - | TS | Google | Session | CLI | Native |
| Pi | - | TS | Agnostic | Extensions | CLI | Templates |
| IronClaw | - | Rust | Agnostic | Local encrypted | Fork of OpenClaw | - |
| Paperclip | 43.9K | Node | Agent-agnostic | DB-backed | Via agents | Injected |
| CCR | 31.1K | TS | Routes any | Unchanged | Unchanged | Unchanged |

Recent Events (March-April 2026)

- March 11: Gemini CLI ships Plan Mode

- March 30: OpenAI open-sources codex-plugin-cc (invoke Codex from Claude Code)

- March 31: Claude Code source leak → Claw Code born, 100K stars in 24 hours

- April: Anthropic ships Claude Code Channels (native Telegram/Discord)

- April: Anthropic cuts off OpenClaw from Claude subscriptions

Skills as the Knowledge Layer

From The Complete Guide to Building Skills for Claude - Anthropic:

Skills sit on top of the harness as the knowledge layer. The harness provides tools (what Claude can do). Skills provide workflows (how Claude should do it).

Three-level progressive disclosure:

- YAML frontmatter — always loaded, tells Claude when to use the skill

- SKILL.md body — loaded when relevant, full instructions

- Linked files — discovered on demand
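Here is a sketch of the three levels. It assumes a `SKILL.md` whose frontmatter has `name` and `description` keys. The parser handles only flat `key: value` lines, so use a real YAML parser in practice. The word-overlap relevance check is a hypothetical stand-in for the model's own judgment.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Skill:
    meta: dict[str, str]  # level 1: YAML frontmatter, always in context
    body: str             # level 2: SKILL.md body, loaded when relevant
    links: list[str]      # level 3: linked files, read only on demand


def parse_skill(text: str) -> Skill:
    """Split a SKILL.md into frontmatter and body. Handles flat `key: value` YAML only."""
    match = re.match(r"---\n(.*?)\n---\n(.*)", text, re.DOTALL)
    if not match:
        raise ValueError("expected a --- frontmatter --- block at the top")
    meta = {}
    for line in match.group(1).splitlines():
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    body = match.group(2)
    links = re.findall(r"\]\(([^)\s]+)\)", body)  # markdown link targets
    return Skill(meta, body, links)


def level1_index(skills: list[Skill]) -> str:
    # The only skill text that is always loaded: one line per skill.
    return "\n".join(f"{s.meta['name']}: {s.meta['description']}" for s in skills)


def load_body(skill: Skill, task: str) -> Optional[str]:
    # Hypothetical relevance test (word overlap). In Claude, the model decides
    # by reading the description; this keyword check only stands in for that.
    overlap = set(skill.meta["description"].lower().split()) & set(task.lower().split())
    return skill.body if overlap else None
```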

From Boris Cherny - Hidden Claude Code Features Thread (Claude Code lead):

- Skills + loops = powerful automation (`/loop 5m /babysit`)

- Hooks for deterministic lifecycle logic (SessionStart, PreToolUse, PermissionRequest, Stop)

- Worktrees for parallel execution (`claude -w`)

- `/batch` for massive parallelized changesets
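As an example of deterministic lifecycle logic, here is a possible `PreToolUse` guard for Bash commands. It is based on our reading of the Claude Code hooks documentation: the event arrives as JSON on stdin with `tool_name` and `tool_input`, and exit code 2 blocks the call and feeds stderr back to Claude. Verify those details against the current docs. The deny-list and the `--hook` flag are our own inventions.

```python
import json
import re
import sys

# Hypothetical deny-list: (pattern, reason). Extend for your own environment.
BLOCKED = [
    (re.compile(r"\brm\s+-(?:rf|fr)\b"), "recursive force delete"),
    (re.compile(r"\bgit\s+push\b.*--force\b"), "force push"),
]


def check(payload: dict) -> tuple:
    """Return (exit_code, stderr_message) for one PreToolUse event.
    Exit code 2 is assumed to make Claude Code block the call and show stderr to Claude."""
    if payload.get("tool_name") != "Bash":
        return 0, ""
    command = payload.get("tool_input", {}).get("command", "")
    for pattern, reason in BLOCKED:
        if pattern.search(command):
            return 2, f"Blocked by hook: {reason} in {command!r}"
    return 0, ""


def main() -> None:
    code, message = check(json.load(sys.stdin))  # the event arrives as JSON on stdin
    if message:
        print(message, file=sys.stderr)
    sys.exit(code)


# `--hook` is our own flag, so importing or testing this file never blocks on stdin.
if __name__ == "__main__" and "--hook" in sys.argv[1:]:
    main()
```

To try it, register the script as a `command` hook on the `PreToolUse` event with a `Bash` matcher in your Claude Code settings, invoking it as `python3 guard.py --hook` (the file name is hypothetical). Unlike an instruction in CLAUDE.md, the check runs every time, whatever the model decides.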

What to actually do

- Spend time on the harness, not the model. Model improvements arrive via API updates. Harness improvements require your work.

- Watch your context window. Past 40% fill, output quality drops. Compact early, use sub-agents for isolation.

- Write skills and context files. They encode what you’ve learned about your domain. This is what compounds.

- Treat the knowledge graph as the durable asset. Harnesses will be replaced. Your accumulated context won’t.

- Don’t lock into one model. Claude Code Router exists because models are swappable. The harness persists.

- Research → Plan → Implement: the workflow that emerges from taking context seriously.

2026-04 Update — Harness Governance as SpecOps

From Spec-Driven Development – Adoption at Enterprise Scale (Hari Krishnan, InfoQ, Feb 2026), cross-linked into Spec-Driven Development and AI-Native SDLC - 2026 Analysis:

- Spec authoring is a first-class engineering surface. Quality practices, version control, review processes, and continuous improvement should apply to specs the same way they apply to production code. Leigh and Ray called this “SpecOps” in their related InfoQ piece.

- Bugs are spec defects, not code defects. Two gap types: spec-to-implementation (implementation diverged from a clear spec → strengthen validation agents in the harness) and intent-to-spec (use case was missed during deliberation → improve spec elicitation). Either way, the harness itself is the thing being improved, not the individual bug.

- Quality engineering moves upstream. The job shifts from validating implementations to validating the context harnesses that guide agent execution.

- Directing agent swarms is an organizational capability, not an individual one. In Adrian Cockcroft’s framing at QCon SF, “directing swarms of agents” is a different skill from managing individual contributors, and enterprises have to install it as a team-level capability, not a developer-level one.

2026-04 Update — Karpathy’s Frame on Agents, Throughput, and program.md

From Karpathy - No Priors Code Agents Autoresearch:

- Token Throughput as the New Coding Bottleneck — Dec 2024 inflection, “Peter Steinberger mode” (10 agents in parallel), orchestration as the new developer skill

- Claws - Persistent Looping Agents as App Replacement — Dobby the elf experiment, apps become APIs, agent is the customer

- Auto Research - Agents as Overnight Experimentation Engines — give an objective, a budget, and walk away; agents found hyperparameter bugs in Karpathy’s hand-tuned GPT-2 repo

- Research Org as Tunable program dot md — the research org itself becomes a markdown document you can version, A/B test, and meta-optimize

- Three Waves of AI Opportunity - Unhobbling, Physical Interface, Robotics — a roughly million-fold gap between bits and atoms; almost all 2026 value is in Wave 1

2026-04 Update — The Folder Is the Agent (44-Agent Production Setup)

From The Folder Is the Agent - Kieran Klaassen (Every, April 2026):

- The Folder Is the Agent - Context Accumulation as Specialization — an agent is not a framework or protocol. It’s a folder with CLAUDE.md + accumulated docs + skills. Change the folder, not the model, and you get a different specialist. Klaassen runs 44 agents across a handful of folders — same model (Opus 4.6), different folder = different specialist (Rails engineer vs ops engineer). Dispatch layer is a Ruby daemon with file-based messaging — no custom networking, no agent-to-agent protocol. Key principle: “Build it, use it, trust it, then orchestrate it” — don’t hand a folder to automation until you’ve used it yourself and trust its flows.
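Klaassen's dispatcher is a Ruby daemon. The following Python sketch only illustrates the file-based messaging idea. The `inbox/` and `outbox/` layout, the JSON message shape, and the `run_agent` callable are assumptions, not his actual setup.

```python
import json
import tempfile
import time
from pathlib import Path
from typing import Callable

Agent = Callable[[Path, dict], str]  # hypothetical: (agent folder, message) -> reply


def send(agent_dir: Path, sender: str, body: str) -> Path:
    """Drop a message file into an agent's inbox. No sockets, no agent-to-agent protocol."""
    inbox = agent_dir / "inbox"
    inbox.mkdir(parents=True, exist_ok=True)
    path = inbox / f"{time.time_ns()}-{sender}.json"
    path.write_text(json.dumps({"from": sender, "body": body}))
    return path


def dispatch_once(root: Path, run_agent: Agent) -> int:
    """One polling pass over every agent folder under root; returns messages handled."""
    handled = 0
    for agent_dir in sorted(p for p in root.iterdir() if p.is_dir()):
        inbox, outbox = agent_dir / "inbox", agent_dir / "outbox"
        if not inbox.is_dir():
            continue
        outbox.mkdir(exist_ok=True)
        for msg_path in sorted(inbox.glob("*.json")):
            message = json.loads(msg_path.read_text())
            # Same model everywhere; the folder (CLAUDE.md, docs, skills) makes the specialist.
            reply = run_agent(agent_dir, message)
            (outbox / msg_path.name).write_text(json.dumps({"to": message["from"], "body": reply}))
            msg_path.unlink()
            handled += 1
    return handled


def run_forever(root: Path, run_agent: Agent, interval: float = 2.0) -> None:
    """The daemon itself: poll, dispatch, sleep."""
    while True:
        dispatch_once(root, run_agent)
        time.sleep(interval)


def demo() -> dict:
    """One round trip with a fake agent that just quotes its folder's CLAUDE.md."""
    with tempfile.TemporaryDirectory() as tmp:
        rails = Path(tmp) / "rails-engineer"
        rails.mkdir()
        (rails / "CLAUDE.md").write_text("You are the Rails engineer.")
        send(rails, "ops-engineer", "Why is the deploy failing?")

        def fake_agent(folder: Path, msg: dict) -> str:
            return f"{(folder / 'CLAUDE.md').read_text()} Re: {msg['body']}"

        dispatch_once(Path(tmp), fake_agent)
        reply_file = next((rails / "outbox").glob("*.json"))
        return json.loads(reply_file.read_text())
```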

2026-04 Update — AXI: Agent-Computer Interface Design, Benchmarked

From AXI Agent eXperience Interface (Kun Chen, April 2026 — 915 benchmark runs):

- AXI Principles - Agent-Ergonomic Interface Design — 10 principles for agent-ergonomic CLIs (Efficiency / Robustness / Discoverability / Fallback). The principles were benchmarked across 915 runs with the same model (Claude Sonnet 4.6), where interface design was the only variable, and the results showed a 1.6x spread in cost and duration. AXI hits 100% success at the lowest cost, turn count, and duration on both the browser and GitHub benchmarks. Mechanisms: TOON format (40% token savings over JSON), combined operations (navigate + snapshot in one call), and next-step hints after output.
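To see where format savings come from, here is a simplified TOON-style encoder for uniform arrays of flat objects. The keys are written once in a header instead of once per row. It follows our understanding of TOON's tabular form (`key[N]{fields}:` followed by indented rows) but skips quoting, nesting, and escaping. It also compares characters rather than real tokens. Treat it as an illustration, not a reproduction of the benchmark's 40% figure.

```python
import json


def _scalar(value) -> str:
    # JSON-style literals for null/booleans; everything else written bare (no quoting here).
    if value is None:
        return "null"
    if isinstance(value, bool):
        return "true" if value else "false"
    return str(value)


def to_toon_table(key: str, rows: list[dict]) -> str:
    """Encode a uniform list of flat dicts as a TOON-style tabular block.
    Simplified: values must not contain commas or newlines."""
    fields = list(rows[0])
    if any(list(r) != fields for r in rows):
        raise ValueError("every row needs the same keys in the same order")
    header = f"{key}[{len(rows)}]{{{','.join(fields)}}}:"
    lines = ["  " + ",".join(_scalar(r[f]) for f in fields) for r in rows]
    return "\n".join([header, *lines])


def char_savings(key: str, rows: list[dict]) -> float:
    """Fraction of characters saved vs. compact JSON; a crude proxy for tokens."""
    as_json = json.dumps({key: rows}, separators=(",", ":"))
    return 1 - len(to_toon_table(key, rows)) / len(as_json)
```

The saving grows with row count, because JSON repeats every key in every object while the tabular header pays for them once.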

2026-04 Update — Agent-Native Architecture Principles

From Agent-native Architectures (Dan Shipper + Claude, Every):

- Agent-Native Architecture - Five Principles for Building After Code Ends — five principles for building apps where agents are first-class: Parity (agent matches UI), Granularity (atomic tools, outcome-based features), Composability (new features = new prompts), Emergent Capability (handles the unanticipated), Improvement Over Time (context compounds). Files as the universal agent interface. The “ultimate test”: can the agent accomplish an outcome you didn’t build a feature for?

Related Notes

- Most PMs Cant Touch Production - Gus Trigos via Aman Khan — Runtime (YC): sandboxed agent sessions so PMs ship PRs directly, engineering becomes quality gate

- Agent Harness Landscape - April 2026 Update — post-leak landscape (Claw Code, Channels, OpenClaw cutoff)

- Agent Harness Comparison - Hermes vs Paperclip vs Claude Code Router — detailed feature comparison

- Dex Horthy - Context Engineering for AI Coding Workflows — context hygiene, dumb zone, Research → Plan → Implement

- Carl Vellotti - Claude Code Mastery Context Engineering — sub-agents for context isolation, builder-validator pattern

- Boris Cherny - Hidden Claude Code Features Thread — 15 features from Claude Code’s lead dev

- 5 Claude Code Patterns - Sequential to Headless — 5 escalating usage patterns

- Anthropic - Claude Managed Agents Launch — cloud-hosted meta-harness (brain/hands architecture)

- The Complete Guide to Building Skills for Claude - Anthropic — official skill architecture

- Spec-Driven Development and AI-Native SDLC - 2026 Analysis — specs as the planning layer

- Karpathy - LLM Wiki Pattern for Personal Knowledge Bases — the knowledge layer above the harness

- Karpathy - No Priors Code Agents Autoresearch — auto-research, claws, program.md pattern

- GAN-Inspired Agent Architecture - Generator Evaluator Loops — multi-agent generator/evaluator pattern inspired by GANs, from Anthropic’s long-running harness work

- Criteria-Based Grading for Subjective Agent Output — making subjective quality (design, UX) gradable through decomposed criteria with explicit weightings

- Sprint Contracts - Negotiated Agent Agreements — generator and evaluator negotiate “done” definition before each sprint

- Harness Simplification as Models Improve — the discipline of stripping scaffolding as model capabilities increase

- The 90 to 100 Percent Automation Gap — 95% automation requires 100% monitoring; only 100% lets the human walk away

- Cat Wu - Head of Product Claude Code Cowork at Anthropic — insider context on Claude Code leak (human error), OpenClaw cutoff (capacity), anti-pattern of over-customizing MCPs

- LLM Document Degradation — agentic tool use does not mitigate document corruption in delegated workflows (DELEGATE-52)

- Delegation Readiness and the Jagged Frontier — domain-specific trust calibration for AI delegation